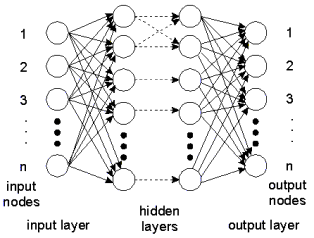

In backpropagation-type neural networks, the neurons, or "nodes", are grouped in layers. We can distinguish three groups of layers in such a network: one input layer, one or more hidden layers, and one output layer. The nodes of two adjacent layers are interconnected. A network is fully connected when each node of a layer is connected to every node of the adjacent layer.

As the figure shows, information (i.e. input signals) enters the network through the input layer. The sole purpose of the input layer is to distribute the information to the hidden layers. The nodes of the hidden layers do the actual processing. The processed information is captured by the nodes of the output layer and passed on as output signals to the outside world.
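The flow of signals from layer to layer can be sketched in a few lines of Python. This is only an illustration, not the 20-sim implementation: the function names, the 2-3-1 layout, the weight values, and the logistic output function are all assumptions, and the output layer is assumed to process its inputs in the same way as a hidden layer.

```python
import math

def sigmoid(s):
    # Assumed output function; the text does not prescribe one.
    return 1.0 / (1.0 + math.exp(-s))

def layer_forward(weight_rows, signals):
    # One fully connected layer: each node (one row of weights)
    # receives every signal coming from the previous layer.
    return [sigmoid(sum(w * x for w, x in zip(row, signals)))
            for row in weight_rows]

def network_forward(layers, inputs):
    # The input layer only distributes the signals; it does no processing.
    signals = list(inputs)
    for weight_rows in layers:
        signals = layer_forward(weight_rows, signals)
    return signals

# Hypothetical network: 2 inputs, 3 hidden nodes, 1 output node.
hidden = [[0.1, 0.2], [-0.3, 0.4], [0.5, -0.6]]
output = [[0.7, -0.8, 0.9]]
outputs = network_forward([hidden, output], [1.0, 0.0])
print(outputs)
```

Each inner list holds the weights of one node, so a layer with three nodes and two incoming signals is a list of three rows with two weights each.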

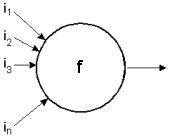

y = f(w1*i1 + w2*i2 + w3*i3 + ... + wn*in)

The nodes of the hidden layers process information by applying a factor (weight) to each input, as shown in the figure above. The sum of the weighted inputs (S) is passed to an output function f. The result y is distributed to the nodes of the next layer.
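As a minimal sketch of the formula above, the computation of a single node might look as follows in Python. The name `node_output` and the example numbers are hypothetical, and the logistic function is only an assumed choice for the output function f, which the text does not specify.

```python
import math

def sigmoid(s):
    # Assumed output function f; other choices are possible.
    return 1.0 / (1.0 + math.exp(-s))

def node_output(weights, inputs, f=sigmoid):
    # Computes y = f(w1*i1 + w2*i2 + ... + wn*in) for one node.
    if len(weights) != len(inputs):
        raise ValueError("weights and inputs must have the same length")
    s = sum(w * i for w, i in zip(weights, inputs))  # weighted sum S
    return f(s)

# Hypothetical node with three inputs.
y = node_output([0.5, -0.25, 1.0], [1.0, 2.0, 0.0])
print(y)
```

With these example values the weighted sum S is zero, so the logistic function returns 0.5.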

When the network is being trained, the weights of each node are adapted according to the backpropagation paradigm. When the network is in operation, the weights are constant.
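The difference between training and operation can be illustrated for a single node. The sketch below is a simplified stand-in, not the algorithm used by 20-sim: it assumes a logistic output function, a squared-error criterion, and a learning rate of 0.5, in which case the weight update for one node reduces to the well-known delta rule. All names and numbers are hypothetical.

```python
import math

def sigmoid(s):
    return 1.0 / (1.0 + math.exp(-s))

def forward(weights, inputs):
    # Operation: the weights are only read, never changed.
    return sigmoid(sum(w * i for w, i in zip(weights, inputs)))

def train_step(weights, inputs, target, rate=0.5):
    # Training: return weights adapted by one gradient-descent step
    # on the squared error (target - y)^2 / 2.
    y = forward(weights, inputs)
    delta = (target - y) * y * (1.0 - y)  # error times sigmoid derivative
    return [w + rate * delta * i for w, i in zip(weights, inputs)]

weights = [0.0, 0.0]
inputs, target = [1.0, 1.0], 1.0

error_before = abs(target - forward(weights, inputs))
for _ in range(100):                      # training phase
    weights = train_step(weights, inputs, target)
error_after = abs(target - forward(weights, inputs))

frozen = list(weights)                    # operation phase
y = forward(weights, inputs)
print(error_before, error_after)
```

In a multi-layer network the same idea applies, except that the error of a hidden node is derived from the errors of the nodes in the next layer, which is what gives backpropagation its name.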

Depending on the kind of neural network, various topologies can be distinguished. The 20-sim Neural Network Editor supports two well-known networks:

1. Adaptive B-spline networks
2. Multi-layer Perceptron networks